As I visit hospital customers and regularly come into contact with their IT departments, I have started to see patterns that can be optimized.

One of these optimizations is improving the IT department's processes for fixing, swapping, or giving information about one of the many pieces of hardware used in a hospital.

To create such a solution, I need a mobile client that can take pictures, a bot that can handle the first line support and create tickets, and something smart that can recognize specific hardware in a picture.

To recap, we need:

- A mobile application that can take pictures.

- A bot that handles the first line support and does ticket creation.

- Something smart that can recognize custom hardware based on an image.

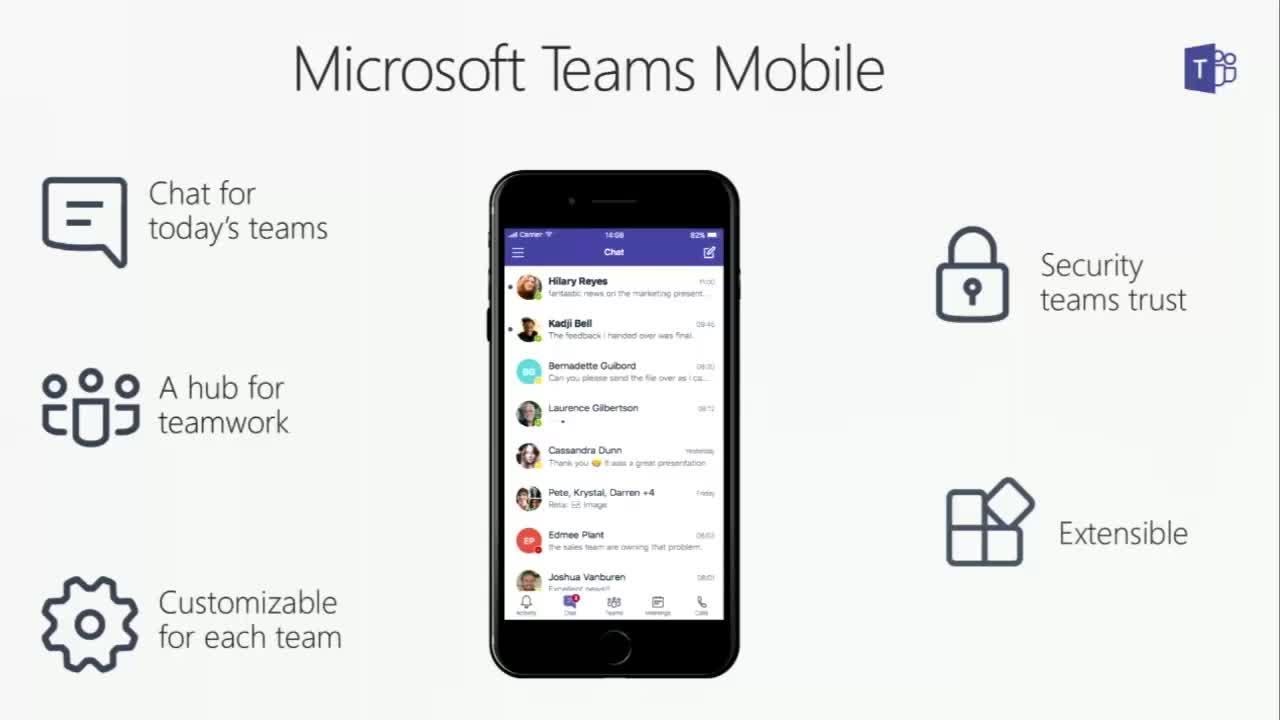

Because most hospitals use Office 365 and have access to Teams, I already have a mobile application that can take pictures. This takes care of point 1!

An extra benefit is that we keep the number of applications as low as possible and get as much functionality as possible into a single application.

The second point is creating a bot that guides users through their problem in a conversational way and creates follow-up tickets.

For the first version of the application, the users can choose between 3 options:

- My product is malfunctioning.

- I want to swap my hardware.

- What piece of hardware is this?

When a user asks to swap or fix their hardware, a ticket is created in the back end of the hospital system.
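To make the flow concrete, here is a minimal sketch of how the three options could be routed. It is not the actual bot code: `create_ticket`, the `Ticket` class and the option keys are hypothetical names, and the real ticket call depends on the hospital's back-end system. The sketch only shows that "swap" and "malfunction" produce a ticket, while the information request does not.

```python
from dataclasses import dataclass
from itertools import count
from typing import Optional, Tuple

# The three options from the first version of the bot.
# The short keys are an assumption made for this sketch.
OPTIONS = {
    "malfunction": "My product is malfunctioning.",
    "swap": "I want to swap my hardware.",
    "identify": "What piece of hardware is this?",
}


@dataclass
class Ticket:
    ticket_id: int
    kind: str
    hardware: str


_ticket_ids = count(1)


def create_ticket(kind: str, hardware: str) -> Ticket:
    """Hypothetical stand-in for the call to the hospital back end."""
    return Ticket(next(_ticket_ids), kind, hardware)


def handle_choice(choice: str, hardware: str) -> Tuple[str, Optional[Ticket]]:
    """Return the bot's reply and, for swap/malfunction, the created ticket."""
    if choice not in OPTIONS:
        raise ValueError(f"Unknown option: {choice}")
    if choice == "identify":
        # Information only: no ticket is needed.
        return f"This looks like: {hardware}.", None
    ticket = create_ticket(choice, hardware)
    return f"Ticket #{ticket.ticket_id} created ({choice}) for {hardware}.", ticket
```

For example, `handle_choice("swap", "infusion pump")` returns a reply plus a ticket, while `handle_choice("identify", "infusion pump")` returns a reply and `None`. The hardware name here is just a placeholder.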

With the help of the Microsoft Bot Framework, I can develop my bot code once and distribute it via different channels. In this case, I chose Teams.

Now that I have my bot connected to the mobile Teams application, the last thing I need is something smart that can recognize hardware based on an image.

Here I use the Custom Vision Service API. With it, I can train a model to recognize hospital hardware with only 6 training images. I then link this service to my bot, so the bot can respond with the correct answer.
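As a rough illustration of that link, the sketch below prepares an image request and picks the most likely tag from the prediction result. It rests on a few assumptions: the prediction URL and key are copied from the Custom Vision portal for your own project, the response is JSON with a `predictions` list whose items carry `tagName` and `probability`, and the 0.5 threshold is an arbitrary value chosen for the example. The function names are made up for this sketch and nothing is sent over the network here.

```python
import json
import urllib.request
from typing import Optional


def build_prediction_request(
    prediction_url: str, prediction_key: str, image_bytes: bytes
) -> urllib.request.Request:
    """Prepare (but do not send) a POST request carrying the picture.

    prediction_url and prediction_key are placeholders for the values
    shown in the Custom Vision portal for a published model.
    """
    return urllib.request.Request(
        prediction_url,
        data=image_bytes,
        headers={
            "Prediction-Key": prediction_key,
            "Content-Type": "application/octet-stream",
        },
        method="POST",
    )


def top_tag(response_body: str, min_probability: float = 0.5) -> Optional[str]:
    """Return the most probable tag name, or None if nothing is confident enough.

    min_probability is an assumed cut-off for this sketch.
    """
    predictions = json.loads(response_body).get("predictions", [])
    if not predictions:
        return None
    best = max(predictions, key=lambda p: p["probability"])
    return best["tagName"] if best["probability"] >= min_probability else None


def bot_reply(tag: Optional[str]) -> str:
    """Turn the recognized tag into the answer the bot gives the user."""
    if tag is None:
        return "Sorry, I could not recognize this hardware."
    return f"This looks like: {tag}."
```

In the bot, the request would be sent with `urllib.request.urlopen` (or any HTTP client) and the response body passed to `top_tag`. Returning `None` below the threshold lets the bot ask for another picture instead of guessing.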

With this last piece, I created a working solution in less than 2 days, with the help of the Microsoft Bot Framework, Custom Vision Service and Teams.

I did not need to create a custom mobile application, and I developed the bot code once. With the easy API access of the Custom Vision Service, I leveraged the power of the cloud to make the bot smart without having to know anything about AI.

A small overview of how the pieces are used:

And here you can see it in action:

Swap

Malfunction

Information